June 08, 2017

When a bug for an intermittent test failure needs attention, who should be contacted? Who is responsible for fixing that bug? For as long as I have been at Mozilla, I have heard people ask variations of this question, and I have never heard a clear answer.

There are at least two problematic approaches that are sometimes suggested:

- The test author: Many test authors are no longer active contributors. Even if they are still active at Mozilla, they may not have modified the test or worked on the associated project for years. Also, making test authors responsible for their tests in perpetuity may dissuade many contributors from writing tests at all!

- The last person to modify the test: Many failing tests have been modified recently, so the last person to modify the test may be well-informed about the test and may be in the best position to fix it. But recent changes may be trivial and tangential to the test. And if the test hasn’t been modified recently, this option may revert to the test author, or someone else who isn’t actively working in the area or is no longer familiar with the code.

There are at least two seemingly viable approaches:

- “You broke it, you fix it”: The person who authored the changeset that initiated the intermittent test failure must fix the intermittent test failure, or back out their change.

- The module owner for the module associated with the test is responsible for the test and must find someone to fix the intermittent test failure, or disable the test.

Let’s have a closer look at these options.

The “you broke it, you fix it” model is appealing because it is a continuation of a principle we accept whenever we check in code: If your change immediately breaks tests or is otherwise obviously faulty, you expect to have your change backed out unless you can provide an immediate fix. If your change causes an intermittent failure, why should it be treated differently? The sheriffs might not immediately associate the intermittent failure with your change, but with time, most frequent intermittent failures can be traced back to the associated changeset, by repeating the test on a range of changesets. Once this relationship between changeset and failure is determined, the changeset needs to be fixed or backed out.

A problem with “you broke it, you fix it” is that it is sometimes difficult and/or time-consuming to find the changeset that started the intermittent. The less frequent the intermittent, the more tests need to be backfilled and repeated before a statistically significant number of test passes can be accepted as evidence that the test is passing reliably. That takes time, test resources, etc.

Sometimes, even when that changeset is identified, it’s hard to see a connection between the change and the failing test. Was the test always faulty, but just happened to pass until a patch modified the timing or memory layout or something like that? That’s a possibility that always comes to mind when the connection between changeset and failing test is less than obvious.

Finally, if the changeset author is not invested in the test, or not familiar with the importance of the test, they may be more inclined to simply skip the test or mark it as failing.

The “module owner” approach is appealing because it reinforces the Mozilla module owner system: Tests are just code, and the code belongs to a module with a responsible owner. Practically, ‘mach file-info bugzilla-component <test-path>’ can quickly determine the bugzilla component, and nearly all bugzilla components now have triage owners (who are hopefully approved by the module owner and knowledgeable about the module).

Module and triage owners ought to be more familiar with the failing test and the features under test than others, especially people who normally work on other modules. They may have a greater interest in properly fixing a test than someone who has only come to the test because their changeset triggered an intermittent failure.

Also, intermittent failures are often indicative of faulty tests: A “good” test passes when the feature under test is working, and it fails when the feature is broken. An intermittently failing test suggests the test is not reliable, so the test’s module owner should be ultimately responsible for improving the test. (But sometimes the feature under test is unreliable, or is made unreliable by a fault in another feature or module.)

A risk I see with the module owner approach is that it potentially shifts responsibility away from those who are introducing problems: If my patch is good enough to avoid immediate backout, any intermittent test failures I cause in other people’s modules is no longer my concern.

As part of the Stockwell project, :jmaher and I have been using a hybrid approach to find developers to work on frequent intermittent test failure bugs. We regularly triage, using tools like OrangeFactor to identify the most troublesome intermittent failures and then try to find someone to work on those bugs. I often use a procedure like this:

- Does hg history show the test was modified just before it started failing? Ping the author of the patch that updated the test.

- Can I retrigger the test a reasonable number of times to track down the changeset associated with the start of the failures? Ping the changeset author.

- Does hg history indicate significant recent changes to the test by one person? Ask that person if they will look at the test, since they are familiar with it.

- If all else fails, ping the triage owner.

This triage procedure has been a great learning experience for me, and I think it has helped move lots of bugs toward resolution sooner, reducing the number of intermittent failures we all need to deal with, but this doesn’t seem like a sustainable mode of operation. Retriggering to find the regression can be especially time consuming and is sometimes not successful. We sometimes have 50 or more frequent intermittent failure bugs to deal with, we have limited time for triage, and while we are bisecting, the test is failing.

I’d much prefer a simple way of determining an owner for problematic intermittents…but I wonder if that’s realistic. While I am frustrated by the times I’ve tracked down a regressing changeset only to find that the author feels they are not responsible, I have also been delighted to find changeset authors who seem to immediately see the problem with their patch. Test authors sometimes step up with genuine concern for “their” test. And triage owners sometimes know, for instance, that a feature is obsolete and the test should be disabled. So there seems to be some value in all these approaches to finding an owner for intermittent failures…and none of the options are perfect.

When a bug for an intermittent test failure needs attention, who should be contacted? Who is responsible for fixing that bug? Sorry, no clear answer here either! Do you have a better answer? Let me know!

June 08, 2017 04:17 AM

May 31, 2017

Project Tofino was an attempt by Mozilla to develop a new web

browser. I think Mark Mayo, Senior Vice President of Firefox, described Tofino best:

[W]e’re working on browser prototypes that look and feel almost nothing like the current

Firefox… [we’re trying to solve] the kinds of problems people have that aren’t currently solved

by anybody’s browser product.

My colleague Richard Newman and I were responsible for designing and implementing a data storage

layer to back Tofino. The engineering organizations I’ve been a part of and have witnessed at

Mozilla do not have a culture of lessons learned, final reports, and post-mortems. I’m a

context-building history-oriented learner so I’d like to change that, one little bit, by providing

my biased account of the forces that resulted in us proposing Mentat to meet Tofino’s data storage needs.

Data storage in Tofino

Richard and I have a lot of experience storing data for browsers (which I’ll return to), and Richard

captured some of his thinking about storage in a blog post. All told, we wanted storage that:

- could handle existing Firefox data like bookmarks, history, and passwords;

- was easy to evolve to handle new Tofino data like page thumbnails, fulltext web content indexes,

and A/B testing results;

- performed well on an under-powered Windows tablet (the kind you might buy at a big-box store).

However, we also carry the scars from implementing multiple Firefox Sync clients. For those not

intimately familiar with browsers and Firefox, Sync is a distributed system that co-ordinates

browser data (bookmarks, history, passwords, etc) across your devices (Desktop, Android, iOS). Sync

in general is a very hard problem and Firefox Sync is a poor rendition of a sync solution . Richard and I knew

that Tofino would eventually evolve to become a Firefox Sync client (or grow a similar Sync

solution). So we also wanted storage that:

- would support an eventual Firefox Sync client.

To meet Tofino’s needs we proposed and are prototyping Project Mentat, a data store designed for embedded client applications. The

initial blog post announcing Mentat (née Datomish)

does a great job framing the data problems Tofino and Firefox face and how we’re trying to solve

them. In a nutshell, Mentat:

- is designed to be embedded into client applications;

- manages a strong schema and helps you evolve that schema over time;

- separates readers from writers, allowing to optimize critical queries;

- maintains a historical log, supporting three-way merges when syncing.

How we got to Mentat

Ancient history [2011-2016]

Any good origin story starts before the beginning, so in that spirit, let us start with ancient

history that predates Tofino. For simplicity, I’ll refer to myself and Richard Newman as the team

. Our experience led us to to design a storage system supporting (and eventually integrated

with) the Firefox Sync implementation. We got here in stages:

- Firefox for Desktop is the cautionary tale: this is what happens when storage and syncing are not integrated;

- Firefox for Android is the second system that learned some architectural lessons but "didn’t go far

enough"; and

- Firefox for iOS gets it "mostly right".

To set the scene, Richard inherited Firefox Sync, the Firefox for Desktop implementation. The

initial development was done in an add-on ; it used observer notifications to witness

changes relevant to Sync and track those changes in memory to propagate upstream. The code landed

with this architecture and the foundational decision to rely on observer notifications resulted in

significant issues :

- not all data sources produced the notifications required for Sync;

- notifications were lost at start-up, shutdown, and when Sync itself had bugs;

- the notification flow is inherently non-transactional .

Richard and I wrote the Firefox for Android Sync implementation. Richard owned Sync

and, eventually, the Firefox for Android storage implementation. Sync was built as a stand-alone

process from day one, factored out of the browser front-end via the Android ContentProvider

storage abstraction . Unfortunately, we didn’t appreciate how significant

transactional syncing would be, and we made Firefox for Android’s data storage "live": the browser

and Sync update the store non-transactionally — potentially at the same time — leading to subtle UI

conflicts and missed changes in Sync .

Richard also owned the Firefox for iOS storage and Firefox Sync implementations. The two systems

were designed to support each other from day one. The storage system is:

- entirely transactional and robust in the face of extreme behaviours;

- well factored, enabling performance improvements to key user interactions due to the single point

of responsibility for data;

- significantly less likely to lose or corrupt Sync data than the other implementations.

These performance improvements are most notable in the iOS top sites implementation. Top sites is

the panel of most frecent sites shown every time the user taps the URL bar to navigate

somewhere new. The iOS top sites panel displays instantly, since the underlying store keeps a

materialized view (a read-only computed cache) in memory and updates it efficiently independent of

the user interface.

Enter Tofino [April 2016]

Tofino was pitched as an entirely new browser product. We anticipated a data footprint similar to

Firefox for iOS, so we started to express the architectural lessons we had learned from the two

previous storage implementations using web technologies . The team started to build an

Electron/Node.js desktop application. We quickly discovered (or perhaps, realized) that a

client/server architecture is the best model for our web technology implementation. We started to

develop a User Agent service: an always available backend that stored browsing data for the user and

leveraged that data to help the user exploit the web. We settled into a familiar pattern,

recognizable to anyone who has built a Web App:

- UI -> transport -> UA service

- UI updates locally (optimistic update)

- UA service -> transport -> notifies UI to update locally (authoritative update)

where the transport was variously HTTP requests, Web Socket messages, or Electron’s IPC.

Tofino almost immediately pivoted to product and user research. Suddenly there was much more

interest in capturing event streams, and an immediate need to support rapid prototyping. We started

to build a Node.js service, backed by SQLite, to store events, materialize views, and update and

publish changes to clients.

Tofino product evolution [September 2016]

We quickly observed that adding data to our hand-written SQLite store required migrating the SQLite

store forward rapidly. Each data type we wanted to add required a back-and-forth between the

product owner, the front-end team, and the storage team. In response, we started to investigate

event stores that might do this work for us. Unfortunately, most offerings were:

- not embeddable (targeted the Java Virtual Machine (JVM), or were intended to scale horizontally in

the cloud); or

- more general than we were comfortable with (graph stores like Neo4J generally don’t query for time

ranges efficiently; document stores like Mongo generally don’t support strong schemas); or

- not general enough (key-value stores like LMDB and LevelDB don’t support strong schemas and

high-level queries); or

- not mature enough to ship to a market of Firefox’s size (side-projects like Cayley).

Evaluating this technical landscape, Richard and I found the ideas behind Cognitect’s Datomic most compelling. Datomic:

- is assertion (event) oriented;

- maintains a full transaction log;

- exposes an expressive, extendable schema;

- models row-oriented (relational) data efficiently;

- is flexible enough to model graph-oriented data.

Sadly, Datomic targets the JVM and we don’t see a path to shipping 200+ Mb of VM and database to

Firefox’s market. (In addition, Datomic is not open-source, making it an awkward cultural fit for Mozilla.)

Concurrently, the product and front-end teams wanted to rapidly prototype using tools like GraphQL.

Our research into GraphQL suggested that performance would be poor and very difficult to address,

but we see Datomic’s query and transact syntax as enabling experimentation by the front-end team

similar in spirit (if not expression) to GraphQL, and also possible to make performant.

To research the ideas by Datomic, we started to adapt Nikita Prokopov’s awesome DataScript, a Clojure{Script} Datomic-alike that transpiles to

JavaScript and can run in the browser and in Node.js. DataScript is an in-memory store, not

suitable for Firefox-sized work loads, and fundamentally synchronous (which is a big problem in the

highly concurrent JavaScript browser environment).

Datomish [July 2016]

The work to make DataScript asynchronous, backed by a persistent store (a flat file, IndexedDB,

SQLite) was close to a rewrite, but we still felt that Datomic’s model was compelling for our

requirements — particularly our emphasis on experimentation and managing change. So we started to

build a Clojure{Script} Datomic-alike. This short-lived prototype was named Datomish. Datomish:

- re-used key pieces of DataScript’s source code;

- persisted to SQLite;

- translated Datomic’s Datalog queries to SQLite queries.

The main idea was to reduce the unknown performance of Datomic’s Datalog queries to the better known

performance of SQLite’s SQL queries. The Datomish prototype convinced us that the SQLite

translation could be done efficiently and yield performant queries against the working-sets we

expect to witness in Firefox in the wild. It was flexible in the ways we wanted, and early

experience suggested that the store was performant enough to back the Tofino browser and related

experiments. However, the Datomish prototype was not fit for greater purposes in three regards:

- the transpiled JavaScript could not be reasonably shipped in a product with Firefox’s audience;

- the ClojureScript prototype suffered from emergent memory leaks due to subtle bugs with our use of

ClojureScript’s persistent data structures and communicating sequential processes implementation,

and exposed impedance mismatches between JavaScript and ClojureScript; and

- we felt that ClojureScript and JavaScript were not the right technologies to back data storage for

Android or iOS.

The end of Tofino [December 2016]

At this point, the Tofino product experiment was stopped. None of the UX experiments and prototypes

were deemed worthy of future investment. The people working on Tofino joined the people working on

Datomish (me and Richard) to prototype a Datomic-alike written in Rust. We hoped to:

- ship in Firefox for Desktop;

- be able to ship on Android and iOS;

- improve on the performance and robustness of the Clojure{Script} implementation.

In response, the newly enlarged team rapidly stood up a Rust version of Datomish, which we named

Project Mentat.

Redirection [April 2017]

Throughout Q1 2016, we focused on implementing the core features of Project Mentat. However, senior

management redirected effort away from "ship in Firefox for Desktop" and toward two alternate goals:

- prototype the new Firefox UI (Photon), using lessons learned from the existing Firefox UI

(Australis) implementation expressed using React in Tofino;

- re-focus on Mentat as a component of new product experiences, in the same way that Tofino had

focused on new product experiences.

In response, we re-focused the people who had been working on Tofino onto the "Photino" Photon UI

prototype .

Crucially, we continued to build Mentat as a store that:

- focused on flexibility and schema evolution; and

- could meet performance requirements through suitable abstractions rather than manual tinkering; and

- would support a robust Sync solution (that was not necessarily Firefox Sync);

while additionally proposing an architectural split between the user interface, browser data, and

web rendering platform that we believe Mozilla should invest in across all its browser offerings.

Status [May 2017]

Where does the Project Mentat codebase stand? As of May 2017 we’ve implemented basic transacting:

- assertion and retraction with :db/add and :db/retract

- map notation like {:db/id ... :some/attribute :some/value ...}

- foundational data types like :db.type/long, :db.type/string, :db.type/keyword, etc

- cardinality constraints with :db.cardinality/one and :db.cardinality/many

- custom identifiers with :db/ident

- schema mutation equivalent to Datomic’s :db.install/attribute

- temporary identifier resolution like Datomic`s {:db/id "tempid"}

- :db.unique/identity and upserts

and basic querying:

- accepting a large subset of Datomic’s Datalog query language

- non-trivial joining with :or and :or-join

- negation with :not and :not-join

- interpolating input values with :in

- projecting scalar, vector, tuple, and relation results

- ordering and limiting result sets

- some non-trivial query pruning and type-aware optimization

The Rust implementation is not yet as full featured as the Clojure{Script} prototype:

- no support for aggregates like (count), (max), and (min) in queries;

- no fulltext search with :db/fulltext true attributes in queries;

- no schema registration and migration layer on top of the basic store;

- no transactor loop and transaction listeners.

But we think what is implemented is robust and has a clear path to production. We anticipate it

will take roughly 3 months to land Mentat into Firefox for Desktop and to back a simple store like

logins or form history using the new technology. The most significant work will be managing the

application life cycles and locking and concurrency; the foundational work for applying transactions

and querying the store is essentially complete.

Review [May 2017]

Mentat is currently running an architectural review gauntlet to decide whether Mozilla will invest

further or cancel the project. The architecture review has focused primarily on whether Mentat can

address the wide-ranging short-term storage architectural failings that hold Firefox for Desktop

down. Unfortunately, we didn’t expect to need to do so at this time — indeed, we were told not to!

— so we’re fighting to justify our approach as I type.

We still believe that Mentat can solve some of Firefox’s storage problems right now and can evolve

to solve most of the others, but we have not engaged directly with most of the concrete problems the

architecture review process foresee. I hope we’ll get time to do so, but if we don’t — this blog

post can serve as a Project Mentat pre-mortem.

Conclusion

I hope this narrative explains the forces that shaped the arc we followed to get to Mentat, and that

not all of the problems we faced, solutions we explored, and artifacts we created are lost immediately.

Thanks to Richard Newman (@rnewman) for being the animating force behind Mentat and this work.

Thanks to Joe Walker for being a great manager to work with and for. And thanks to Mark Mayo and

the Tofino team for trying a new thing, regardless of the outcome. Innovator’s dilemma is a real thing.

Many thanks to Joe Walker, who provided initial feedback on this blog post before it was intended

for public consumption; and to early readers and reviewers Richard Newman, Grisha Kruglov, Ralph

Giles, and Francois Marier, who provided valuable feedback and compelled me to expand or rewrite

many sections.

Discussion is best conducted on IRC: I’m nalexander in irc.mozilla.org/#mentat and on Slack

(Mozilla Corporation only), and I’m @ncalexander on Twitter.

Changes

- Wed 31 May 2017: Incorporated suggestions from Francois Marier.

- Mon 29 May 2017: Incorporated suggestions from Grisha Kruglov, Ralph Giles, and Richard Newman.

- Wed 24 May 2017: Initial version.

May 31, 2017 11:00 PM

May 27, 2017

Ever since the original iPhone came out, I’ve had several ideas about how they managed to achieve such fluidity with relatively mediocre hardware. I mean, it was good at the time, but Android still struggles on hardware that makes that look like a 486… It’s absolutely my fault that none of these have been implemented in any open-source framework I’m aware of, so instead of sitting on these ideas and trotting them out at the pub every few months as we reminisce over what could have been, I’m writing about them here. I’m hoping that either someone takes them and runs with them, or that they get thoroughly debunked and I’m made to look like an idiot. The third option is of course that they’re ignored, which I think would be a shame, but given I’ve not managed to get the opportunity to implement them over the last decade, that would hardly be surprising. I feel I should clarify that these aren’t all my ideas, but include a mix of observation of and conjecture about contemporary software. This somewhat follows on from the post I made 6 years ago(!) So let’s begin.

1. No main-thread UI

The UI should always be able to start drawing when necessary. As careful as you may be, it’s practically impossible to write software that will remain perfectly fluid when the UI can be blocked by arbitrary processing. This seems like an obvious one to me, but I suppose the problem is that legacy makes it very difficult to adopt this at a later date. That said, difficult but not impossible. All the major web browsers have adopted this policy, with caveats here and there. The trick is to switch from the idea of ‘painting’ to the idea of ‘assembling’ and then using a compositor to do the painting. Easier said than done of course, most frameworks include the ability to extend painting in a way that would make it impossible to switch to a different thread without breaking things. But as long as it’s possible to block UI, it will inevitably happen.

2. Contextually-aware compositor

This follows on from the first point; what’s the use of having non-blocking UI if it can’t respond? Input needs to be handled away from the main thread also, and the compositor (or whatever you want to call the thread that is handling painting) needs to have enough context available that the first response to user input doesn’t need to travel to the main thread. Things like hover states, active states, animations, pinch-to-zoom and scrolling all need to be initiated without interaction on the main thread. Of course, main thread interaction will likely eventually be required to update the view, but that initial response needs to be able to happen without it. This is another seemingly obvious one – how can you guarantee a response rate unless you have a thread dedicated to responding within that time? Most browsers are doing this, but not going far enough in my opinion. Scrolling and zooming are often catered for, but not hover/active states, or initialising animations (note; initialising animations. Once they’ve been initialised, they are indeed run on the compositor, usually).

3. Memory bandwidth budget

This is one of the less obvious ideas and something I’ve really wanted to have a go at implementing, but never had the opportunity. A problem I saw a lot while working on the platform for both Firefox for Android and FirefoxOS is that given the work-load of a web browser (which is not entirely dissimilar to the work-load of any information-heavy UI), it was very easy to saturate memory bandwidth. And once you saturate memory bandwidth, you end up having to block somewhere, and painting gets delayed. We’re assuming UI updates are asynchronous (because of course – otherwise we’re blocking on the main thread). I suggest that it’s worth tracking frame time, and only allowing large asynchronous transfers (e.g. texture upload, scaling, format transforms) to take a certain amount of time. After that time has expired, it should wait on the next frame to be composited before resuming (assuming there is a composite scheduled). If the composited frame was delayed to the point that it skipped a frame compared to the last unladen composite, the amount of time dedicated to transfers should be reduced, or the transfer should be delayed until some arbitrary time (i.e. it should only be considered ok to skip a frame every X ms).

It’s interesting that you can see something very similar to this happening in early versions of iOS (I don’t know if it still happens or not) – when scrolling long lists with images that load in dynamically, none of the images will load while the list is animating. The user response was paramount, to the point that it was considered more important to present consistent response than it was to present complete UI. This priority, I think, is a lot of the reason the iPhone feels ‘magic’ and Android phones felt like junk up until around 4.0 (where it’s better, but still not as good as iOS).

4. Level-of-detail

This is something that I did get to partially implement while working on Firefox for Android, though I didn’t do such a great job of it so its current implementation is heavily compromised from how I wanted it to work. This is another idea stolen from game development. There will be times, during certain interactions, where processing time will be necessarily limited. Quite often though, during these times, a user’s view of the UI will be compromised in some fashion. It’s important to understand that you don’t always need to present the full-detail view of a UI. In Firefox for Android, this took the form that when scrolling fast enough that rendering couldn’t keep up, we would render at half the resolution. This let us render more, and faster, giving the impression of a consistent UI even when the hardware wasn’t quite capable of it. I notice Microsoft doing similar things since Windows 8; notice how the quality of image scaling reduces markedly while scrolling or animations are in progress. This idea is very implementation-specific. What can be dropped and what you want to drop will differ between platforms, form-factors, hardware, etc. Generally though, some things you can consider dropping: Sub-pixel anti-aliasing, high-quality image scaling, render resolution, colour-depth, animations. You may also want to consider showing partial UI if you know that it will very quickly be updated. The Android web-browser during the Honeycomb years did this, and I attempted (with limited success, because it’s hard…) to do this with Firefox for Android many years ago.

Pitfalls

I think it’s easy to read ideas like this and think it boils down to “do everything asynchronously”. Unfortunately, if you take a naïve approach to that, you just end up with something that can be inexplicably slow sometimes and the only way to fix it is via profiling and micro-optimisations. It’s very hard to guarantee a consistent experience if you don’t manage when things happen. Yes, do everything asynchronously, but make sure you do your book-keeping and you manage when it’s done. It’s not only about splitting work up, it’s about making sure it’s done when it’s smart to do so.

You also need to be careful about how you measure these improvements, and to be aware that sometimes results in synthetic tests will even correlate to the opposite of the experience you want. A great example of this, in my opinion, is page-load speed on desktop browsers. All the major desktop browsers concentrate on prioritising the I/O and computation required to get the page to 100%. For heavy desktop sites, however, this means the browser is often very clunky to use while pages are loading (yes, even with out-of-process tabs – see the point about bandwidth above). I highlight this specifically on desktop, because you’re quite likely to not only be browsing much heavier sites that trigger this behaviour, but also to have multiple tabs open. So as soon as you load a couple of heavy sites, your entire browsing experience is compromised. I wouldn’t mind the site taking a little longer to load if it didn’t make the whole browser chug while doing so.

Don’t lose sight of your goals. Don’t compromise. Things might take longer to complete, deadlines might be missed… But polish can’t be overrated. Polish is what people feel and what they remember, and the lack of it can have a devastating effect on someone’s perception. It’s not always conscious or obvious either, even when you’re the developer. Ask yourself “Am I fully satisfied with this” before marking something as complete. You might still be able to ship if the answer is “No”, but make sure you don’t lose sight of that and make sure it gets the priority it deserves.

One last point I’ll make; I think to really execute on all of this, it requires buy-in from everyone. Not just engineers, not just engineers and managers, but visual designers, user experience, leadership… Everyone. It’s too easy to do a job that’s good enough and it’s too much responsibility to put it all on one person’s shoulders. You really need to be on the ball to produce the kind of software that Apple does almost routinely, but as much as they’d say otherwise, it isn’t magic.

May 27, 2017 12:00 PM

February 23, 2017

Keeping up my yearly blogging cadence, it’s about time I wrote to let people know what I’ve been up to for the last year or so at Mozilla. People keeping up would have heard of the sad news regarding the Connected Devices team here. While I’m sad for my colleagues and quite disappointed in how this transition period has been handled as a whole, thankfully this hasn’t adversely affected the Vaani project. We recently moved to the Emerging Technologies team and have refocused on the technical side of things, a side that I think most would agree is far more interesting, and also far more suited to Mozilla and our core competence.

Project DeepSpeech

So, out with Project Vaani, and in with Project DeepSpeech (name will likely change…) – Project DeepSpeech is a machine learning speech-to-text engine based on the Baidu Deep Speech research paper. We use a particular layer configuration and initial parameters to train a neural network to translate from processed audio data to English text. You can see roughly how we’re progressing with that here. We’re aiming for a 10% Word Error Rate (WER) on English speech at the moment.

You may ask, why bother? Google and others provide state-of-the-art speech-to-text in multiple languages, and in many cases you can use it for free. There are multiple problems with existing solutions, however. First and foremost, most are not open-source/free software (at least none that could rival the error rate of Google). Secondly, you cannot use these solutions offline. Third, you cannot use these solutions for free in a commercial product. The reason a viable free software alternative hasn’t arisen is mostly down to the cost and restrictions around training data. This makes the project a great fit for Mozilla as not only can we use some of our resources to overcome those costs, but we can also use the power of our community and our expertise in open source to provide access to training data that can be used openly. We’re tackling this issue from multiple sides, some of which you should start hearing about Real Soon Now™.

The whole team has made contributions to the main code. In particular, I’ve been concentrating on exporting our models and writing clients so that the trained model can be used in a generic fashion. This lets us test and demo the project more easily, and also provides a lower barrier for entry for people that want to try out the project and perhaps make contributions. One of the great advantages of using TensorFlow is how relatively easy it makes it to both understand and change the make-up of the network. On the other hand, one of the great disadvantages of TensorFlow is that it’s an absolute beast to build and integrates very poorly with other open-source software projects. I’ve been trying to overcome this by writing straight-forward documentation, and hopefully in the future we’ll be able to distribute binaries and trained models for multiple platforms.

Getting Involved

We’re still at a fairly early stage at the moment, which means there are many ways to get involved if you feel so inclined. The first thing to do, in any case, is to just check out the project and get it working. There are instructions provided in READMEs to get it going, and fairly extensive instructions on the TensorFlow site on installing TensorFlow. It can take a while to install all the dependencies correctly, but at least you only have to do it once! Once you have it installed, there are a number of scripts for training different models. You’ll need a powerful GPU(s) with CUDA support (think GTX 1080 or Titan X), a lot of disk space and a lot of time to train with the larger datasets. You can, however, limit the number of samples, or use the single-sample dataset (LDC93S1) to test simple code changes or behaviour.

One of the fairly intractable problems about machine learning speech recognition (and machine learning in general) is that you need lots of CPU/GPU time to do training. This becomes a problem when there are so many initial variables to tweak that can have dramatic effects on the outcome. If you have the resources, this is an area that you can very easily help with. What kind of results do you get when you tweak dropout slightly? Or layer sizes? Or distributions? What about when you add or remove layers? We have fairly powerful hardware at our disposal, and we still don’t have conclusive results about the affects of many of the initial variables. Any testing is appreciated! The Deep Speech 2 paper is a great place to start for ideas if you’re already experienced in this field. Note that we already have a work-in-progress branch implementing some of these ideas.

Let’s say you don’t have those resources (and very few do), what else can you do? Well, you can still test changes on the LDC93S1 dataset, which consists of a single sample. You won’t be able to effectively tweak initial parameters (as unsurprisingly, a dataset of a single sample does not represent the behaviour of a dataset with many thousands of samples), but you will be able to test optimisations. For example, we’re experimenting with model quantisation, which will likely be one of multiple optimisations necessary to make trained models usable on mobile platforms. It doesn’t particularly matter how effective the model is, as long as it produces consistent results before and after quantisation. Any optimisation that can be made to reduce the size or the processor requirement of training and using the model is very valuable. Even small optimisations can save lots of time when you start talking about days worth of training.

Our clients are also in a fairly early state, and this is another place where contribution doesn’t require expensive hardware. We have two clients at the moment. One written in Python that takes advantage of TensorFlow serving, and a second that uses TensorFlow’s native C++ API. This second client is the beginnings of what we hope to be able to run on embedded hardware, but it’s very early days right now.

And Finally

Imagine a future where state-of-the-art speech-to-text is available, for free (in cost and liberty), on even low-powered devices. It’s already looking like speech is going to be the next frontier of human-computer interaction, and currently it’s a space completely tied up by entities like Google, Amazon, Microsoft and IBM. Putting this power into everyone’s hands could be hugely transformative, and it’s great to be working towards this goal, even in a relatively modest capacity. This is the vision, and I look forward to helping make it a reality.

February 23, 2017 04:55 PM

October 31, 2016

I wrote earlier about my initial experience with triaging frequent intermittent test failures. I was happy to find that most of the most-frequent test failures were under active investigation, but that also meant that finding important bugs in need of triage was a frustrating and time consuming process.

Thankfully, :ekyle provided me with a script to identify “neglected oranges”: Frequent intermittent test failure bugs with no recent comments. The neglected oranges script provides search results not unlike the default search on Orange Factor, but filters out bugs with recent comments from non-robots. It also shows the bug age and how long it has been since the last comment:

This has provided a treasure trove of bugs for triage.

So, now that I can find bugs for frequent intermittent failures that don’t have anyone actively working on them, can I instigate action? Does this type of triage lead to bug resolution and a reduction in Orange Factor (average number of failures per push)? Here’s one way of looking at it: If I look at the bugs I’ve recently triaged and look at the time those bugs were open before I commented on them, I find that, on average, those bugs were open for 65 days before my triage comment. Typically I tried to find someone familiar with the bug and pointed out that it was a frequently failing test; sometimes I offered some insight, or suggested some action (“this is a timeout in a long-running test; if it cannot be optimized or split up, requestLongerTimeout() should avoid the timeout”). On average, those bugs were resolved within 3 days of my triage comment. Wow!

I offer this evidence that triage of neglected oranges makes a difference, but also caution not to expect that much of a difference over time: I’ve chosen bugs that were open for months and with continued triage, we may quickly eliminate these long-neglected bugs (let’s hope!). I’ve also likely chosen “easy” bugs – bugs with an obvious, or at least apparent, resolution. There will also be intractable bugs, surely, and bugs without any apparent owner, or where interested parties cannot agree on a solution.

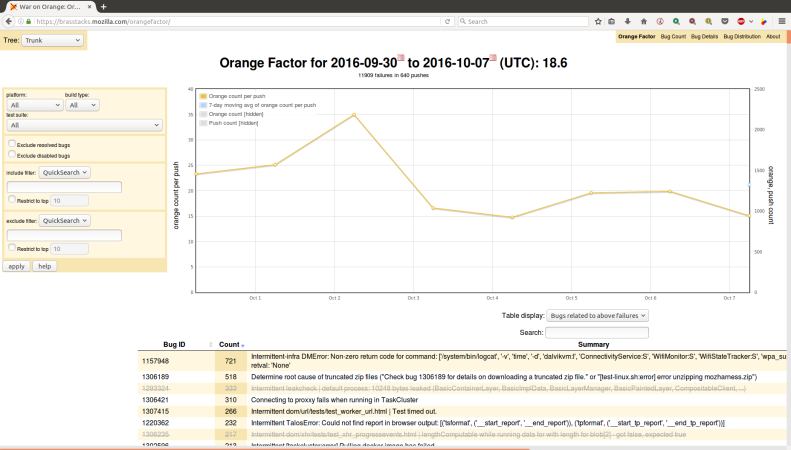

It is similarly difficult to draw conclusions from Orange Factor failure rates, but let’s look at those anyway, roughly for the time period I have been triaging:

That’s encouraging, isn’t it? I don’t know how much of that improvement was instigated by my triage comments, but I like to think I have contributed to the improvement, and that this type of action can continue to drive down failure rates. I’ll keep spending at least a few hours each week on neglected oranges, and see how that goes for the next couple of months. Can we bring Orange Factor under 10? Under 5?

October 31, 2016 08:20 PM

October 28, 2016

Many of our frequent intermittent test failures are timeouts. There are a lot of ways that a test – or a test job – can time out. Some popular bug titles demonstrate the range of failure messages:

We have tried re-wording some of these messages with the aim of clarifying the cause of the timeout and possible remedies, but I still see lots of confusion in bugs. In some cases, I think a complete explanation is much more involved than we can hope to express in an error message. I think we should write up a wiki page or MDN article with detailed explanations of messages like this, and point to that page from error messages in the test log.

One of the first things I do when I see a test failure due to timeout is look for a successful run of the same test on the same platform, and then compare the timing between the success and failure cases. If a test takes 4 seconds to run in the success case but times out after 45 seconds, perhaps there is an intermittent hang; but if the test takes 40 seconds to run successfully and intermittently times out after 45 seconds, it’s probably just a long running test with normal variation in run time.

This suggests some nice-to-have tools:

- push a new test to try, get a report of how long your test runs on each platform, perhaps with a warning if run-time approaches known time-outs, or perhaps some arbitrary threshold;

- same for longest duration without output (avoid “no output timeout”);

- use custom code or a special test harness mode to identify existing long-running tests, for proactive follow-up to prevent timeouts in the future.

October 28, 2016 06:39 PM

October 17, 2016

Recently, I have been trying to spend a little time each day looking over the most frequent intermittent test failures in search of neglected bugs. I use Orange Factor to identify the most frequent failures, then scan the associated bugs in bugzilla to see if there is someone actively working on the bug.

I have had some encouraging successes. For example, in bug 1307388, I found a frequent intermittent with no one assigned and no sign of activity. The test had started failing recently – a few days earlier – with no sign of failures before that. A quick check of the mercurial logs showed that the test had been modified the day that it started failing, and a needinfo of the patch author led to immediate action.

In bug 1244707, the bug had been triaged several months ago and assigned to backlog, but the failure frequency had since increased dramatically. Pinging someone familiar with the test quickly led to discussion and resolution.

My experience in each of these cases was really rewarding: It took me just a few minutes to review the bug and bring it to the attention of someone who was interested and understood the failure.

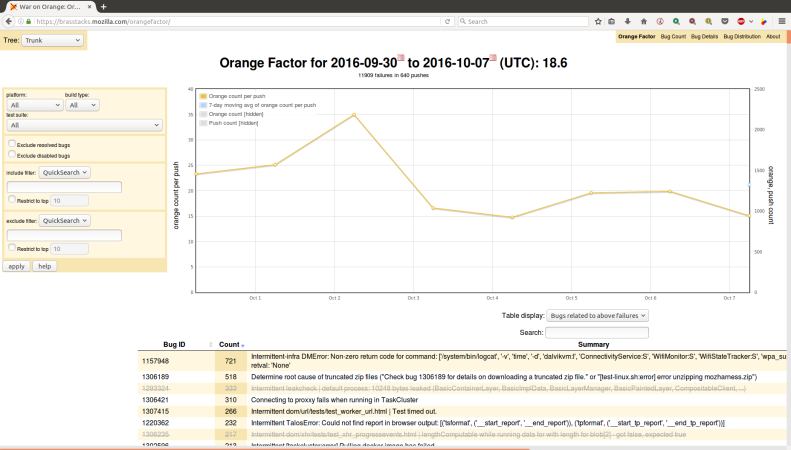

Finding neglected bugs is more onerous. Orange Factor can be used to identify frequent test failures; the default view on https://brasstacks.mozilla.com/orangefactor/ provides a list, ordered by frequency, but most of those are not neglected — some one is already working on them and they just need time to investigate and land a fix. I think the sheriffs do a good job of finding owners for frequent intermittents, so it seems like 90% of the top intermittents have owners, and they are usually actively working on resolving those issues. I don’t think there’s any way to see that activity on Orange Factor:

So I end up opening lots of bugs each day before I find one that “needs help”. Broadly speaking, I’m looking for a search for bugs matching something like:

- intermittent test failure

- fails frequently (OrangeFactor Robot’s daily comment?)

- no recent (last 7 days?) human-generated (not OrangeFactor Robot) bug comments

OrangeFactor does a good job of identifying the frequent failures, but I don’t think it has any data on bug activity…and this notion of bug activity is hazy anyway. Ping me if you have a better intermittent orange triage procedure, or thoughts on how to do this more efficiently.

** Update – I’ve been getting lots of ideas from folks on irc for better triaging:

ryanvm

- look to aurora/beta for bugs that have been around for longer

- would be nice if a dashboard would show trends for a bug (now happening more frequently, etc) – like socorro

- bugzilla data fed to presto, so marrying it to treeherder with redash may be possible (mdoglio may know more)

wlach

- might be able to use redash for change detection/trends once treeherder’s db is hooked up to it

ekyle

- there’s an OrangeFactorv2 planned

- the bugzilla es cluster has all bug data in easy to query format

October 17, 2016 08:11 PM

October 13, 2016

Normal releases are consistent and predictable. Scheduled releases benefit developers, testers, support and PR. Unpredictable releases can cause communication problems, stress and fatigue. Those can lead to poor software quality and developer turn-over.

Sometimes we need to deal with unexpected issues that can’t wait for a normal release. Some examples include:

- High volume crashes

- Broken functionality

- Security issues

- Special date-based features

Anyone should be able to suggest an off-cycle release, so make sure there’s a straightforward, simple process for doing it. Identify that a special release is really necessary. Maybe the issue can wait for the next normal release. Consider using an approval process to decide if the release is warranted. An approval process creates a small hurdle that forces some justification. An off-cycle release is not cheap and has potential to derail the normal release process. Don’t put the normal release cycle at risk.

Some things to keep in mind:

- Clearly identify the need. If you can’t, you probably don’t need the release.

- Limit the scope of work to just what needs to be done for the issue. Be laser focused.

- Make sure the work can be completed within the shortened cycle. Otherwise, just let the work happen in the normal release flow.

- Choose an owner to drive the release and a set of stakeholders that need to track the release.

- Triage frequently to make sure the short cycle stays on track. Over-communicate.

- Test and verify the code changes. By limiting the scope, you should also be limiting the amount of required testing.

Be ready for the unexpected. Get really good at it. The best releases are boring releases.

October 13, 2016 02:41 PM

October 07, 2016

Our automated tests seem to fail a lot. Instead of a sea of green, a typical good push often looks more like:

I’ve been thinking about ways that we can improve on that: Ways that we can reduce those pesky intermittent oranges.

Here’s one idea: Be more aggressive about disabling (skipping) tests that fail intermittently.

For today anyway, let’s put aside those tests that fail infrequently. If a test fails only rarely, there’s less to be gained by skipping it. It may also be harder to reproduce such failures, and harder to fix them and get them running again.

Instead, let’s concentrate (for now) on frequent, persistent test failures. There are lots of them:

Notice that the most frequent intermittent failure for this one-week period is bug 1157948, which failed 721 times (well, it was reported/starred 721 times — it probably failed more than that!). Guess what happened the week before that? Yeah, another 700 or so oranges. And the week before that and … This is definitely a persistent, frequent intermittent failure.

I am actually intimately familiar with bug 1157948. I’ve worked hard to resolve it, and lots of other people have too, and I’m hopeful that a fix is landing for it right now. Still, it took over 3 months to fix this. What did we gain by running the affected tests for those 3 months? Was it worth the 10000+ failures that sheriffs and developers saw, read, diagnosed, and starred?

Bug 1157948 affected all taskcluster-initiated Android tests, so skipping the affected tests would have meant losing a lot of coverage. But it is not difficult to find other bugs with over 100 failures per week that affect just one test (like bug 1305601, just to point out an example). It would be easy to disable (skip-if annotate) this test while we work on it, and wouldn’t that be better? It won’t be fixed overnight, but it will continue to fail overnight — and there’s a cost to that.

There’s a trade-off here for sure. A skipped test means less coverage. If another change causes a spontaneous fix to this test, we won’t notice the change if it is skipped. And we won’t notice a change in the frequency of failures. How important are these considerations, and are they important enough that we can live with seeing, reporting, and tracking all these test failures?

I’m not yet sure about the particulars of when and how to skip intermittent failures, but it feels like we would profit by being more aggressive about skipping troublesome tests, particularly those that fail frequently and persistently.

October 07, 2016 11:16 PM

September 30, 2016

Highlights:

- Recent outstanding improvements in APK size, memory use, and startup time, all due to :esawin’s efforts in bug 1291424.

APK Size

You can see the size of every build on treeherder using Perfherder.

Here’s how the APK size changed over the quarter, for mozilla-central Android 4.0 API15+ opt builds:

As seen in the past, the APK size seems to gradually increase over time. But this quarter there is a pleasant surprise, with a recent very large improvement. That is :esawin’s change from bug 1291424. Nice!

Memory

We track some memory metrics using test_awsy_lite.

Again, there is a tremendous improvement with bug 1291424. Thankyou :esawin!

Autophone-Talos

This section tracks Perfherder graphs for mozilla-central builds of Firefox for Android, for Talos tests run on Autophone, on android-6-0-armv8-api15. The test names shown are those used on treeherder. See https://wiki.mozilla.org/Buildbot/Talos for background on Talos.

tsvgx

An svg-only number that measures SVG rendering performance. About half of the tests are animations or iterations of rendering. This ASAP test (tsvgx) iterates in unlimited frame-rate mode thus reflecting the maximum rendering throughput of each test. The reported value is the page load time, or, for animations/iterations – overall duration the sequence/animation took to complete. Lower values are better.

tp4m

Generic page load test. Lower values are better.

No significant improvements or regressions noted for tsvgx or tp4m.

Autophone

Throbber Start / Throbber Stop

Browser startup performance is measured on real phones (a variety of popular devices).

Here’s a quick summary for the local blank page test on various devices:

Again, there is an excellent performance improvement with bug 1291424. Yahoo!

See bug 953342 to track autophone throbber regressions (none this quarter).

September 30, 2016 07:57 PM

September 14, 2016

We all want to ship as fast as possible, while making sure we can control the quality of our product. Continuous deployment means we can ship at any time, right? Well, we still need to balance the unstable and stable parts of the codebase.

Web Deploys vs Application Deploys

The ability to control changes in your stable codebase is usually the limiting factor in how quickly and easily you can ship your product to people. For example, web products can ship frequently because it’s somewhat easy to control the state of the product people are using. When something is updated on the website, users get the update when loading the content or refreshing the page. With mobile applications, it can be harder to control the version of the product people are using. After pushing an update to the store, people need to update the application on their devices. This takes time and it’s disruptive. It’s typical for several versions of a mobile application to be active at any given time.

It’s common for mobile application development to use time-based deployment windows, such as 2 or 4 weeks. Every few weeks, the unstable codebase is promoted to the stable codebase and tasks (features and bug fixes) which are deemed stable are made ready to deploy. Getting ready to deploy could mean running a short Beta, to test the release candidate with a larger, more varied, test group.

It’s important to remember, these deployment windows are not development sprints! They are merely opportunities to deploy stable code. Some features or bug fixes could take many weeks to complete. Once complete, the code can be deployed at the next window.

Tracking the Tasks

Just because you use 2 week deployment windows doesn’t mean you can really ship a quality product every 2 weeks. The deployment window is an artificial framework we create to add some structure to the process. At the core, we need to be able to track the tasks. What is a task? Let’s start with something that’s easy to visualize: a feature.

What work goes into getting a feature shipped?

- Planning: Define and scope the work.

- Design: Design the UI and experience.

- Coding: Do the implementation. Iterate with designers & product managers.

- Reviewing: Examine & run the code, looking for problems. Code is ready to land after a successful review. Otherwise, it goes back to coding to fix issues.

- Testing: Test that the feature is working correctly and nothing broke in the process. Defects might require sending the work back to development.

- Push to Stable: Once implemented, tested and verified, the code can be moved to the stable codebase.

In the old days, this was a waterfall approach. These days, we can use iterative, overlapping processes. A flow might crudely look like this:

Each of these steps takes a non-zero amount of time. Some have to be repeated. The goal is to create a feature that has the desired behavior and at a known level of quality. Note that landing the code is not the final step. The work can only be called complete when it’s been verified as stable enough to ship.

Bug fixes are similar to features. The flow might look like this:

Imagine you have many of these flows happening at the same time. Ongoing work happens on the unstable codebase. As work is completed, tested and verified at an expectable level of quality, it can be moved to the stable codebase. All work happens on the unstable codebase. Try very hard to keep work on the stable codebase to a minimum – usually disabling/enabling code or backing out unstable code.

Crash Landings

One practice I’ve seen happen on development teams is attempting to crash land code right before a deployment window. This is bad for a few reasons:

- It forces many code reviews to happen simultaneously across the team, leading to delays since code review is an iterative cycle.

- It forces large amounts of code to be merged during a short time period, likely leading to merge conflicts – leading to more delays.

- It forces a lot of testing to happen at the same time, leading to backlogs and delays. Especially since testing, fixing and verifying is an iterative cycle.

The end result is anti-climatic for everyone: code landed at a deployment window is almost never shipped in the window. In fact, the delays caused by crash landing lead to a lot of code missing the deployment window.

Smooth Landings

A different approach is to spread out the code landings. Allow code reviews and testing/fixing cycles to happen in a more balanced manner. More code is verified as stable and can ship in the deployment window. Code that is not stable is disabled via build-time or runtime flags, or in extreme cases, backout out of the stable codebase.

This balanced approach also reduces the stress that accompanies rushing code reviews and testing. The process becomes more predictable and even enjoyable. Teams thrive in healthy environments.

Once you get comfortable with deployment windows and sprints being very different things, you could even start getting more creative with deployments. Could you deploy weekly? I think it’s possible, but the limiting factor becomes your ability to create stable builds, test and verify those builds and submit those builds to the store. Yes, you still need to test the release candidates and react to any unexpected outcomes from the testing. Testing the release candidates with a larger group (Beta testing) will usually turn up issues not found in other testing. At larger scales, many things thought to be only hypothetical become reality and might need to be addressed. Allowing for this type of beta testing improves quality, but may limit how short a deployment window can be.

Remember, it’s difficult to undo or remove an unexpected issue from a mobile application user population. Users are just stuck with the problem until they get around to updating to a fixed version.

I’ve seen some companies use short deployment window techniques for internal test releases, so it’s certainly possible. Automation has to play a key role, as does tracking and triaging the bugs. Risk assessment is a big part of shipping software. Know your risks, ship your software.

September 14, 2016 07:35 PM

September 12, 2016

July 18, 2016

The web as we know it basically runs on advertising. Which is not really great, for a variety of reasons. But charging people outright for content doesn't work that great either. How about bitcoin mining instead?

Webpages can already run arbitary computation on your computer, so instead of funding themselves through ads, they could instead include a script that does some mining client-side and submits the results back to their server. Instead of paying with dollars and cents you're effectively paying with electricity and compute cycles. Seems a lot more palatable to me. What do you think?

July 18, 2016 02:49 AM

July 04, 2016

Highlights:

- gradual increases in APK size and memory use

- not much change in tsvgx or tp4m

- autophone throbber data available in perfherder

APK Size

You can see the size of every build on treeherder using Perfherder.

Here’s how the APK size changed over the quarter, for mozilla-central Android 4.0 API15+ opt builds:

APK size generally grew, generally in small increments. Our APK is about 1.3 MB larger today than it was 3 months ago. The largest increase, of about 400 KB around May 4, was caused by and discussed in bug 1260208. The largest decrease, of about 200 KB around April 25, was caused by bug 1266102.

For the same period, libxul.so also generally grew gradually:

Memory

We track some memory metrics using test_awsy_lite.

These memory measurements are fairly steady over the quarter, with a gradual increase over time.

Autophone-Talos

This section tracks Perfherder graphs for mozilla-central builds of Firefox for Android, for Talos tests run on Autophone, on android-6-0-armv8-api15. The test names shown are those used on treeherder. See https://wiki.mozilla.org/Buildbot/Talos for background on Talos.

tsvgx

An svg-only number that measures SVG rendering performance. About half of the tests are animations or iterations of rendering. This ASAP test (tsvgx) iterates in unlimited frame-rate mode thus reflecting the maximum rendering throughput of each test. The reported value is the page load time, or, for animations/iterations – overall duration the sequence/animation took to complete. Lower values are better.

tp4m

Generic page load test. Lower values are better.

No significant improvements or regressions noted for tsvgx or tp4m.

Autophone

Throbber Start / Throbber Stop

Browser startup performance is measured on real phones (a variety of popular devices).

For the first time on this blog, I’ve pulled this graph from Perfherder, rather than phonedash. A wealth of throbber start/throbber stop data is now available in Perfherder. Here’s a quick summary for the local blank page test on various devices:

See bug 953342 to track autophone throbber regressions.

July 04, 2016 11:22 PM

June 01, 2016

I’m currently working on the Vaani project at Mozilla, and part of my work on that allows me to do some exploration around the topic of speech recognition and speech assistants. After looking at some of the commercial offerings available, I thought that if we were going to do some kind of add-on API, we’d be best off aping the Amazon Alexa skills JS API. Amazon Echo appears to be doing quite well and people have written a number of skills with their API. There isn’t really any alternative right now, but I actually happen to think their API is quite well thought out and concise, and maps well to the sort of data structures you need to do reliable speech recognition.

So skipping forward a bit, I decided to prototype with Node.js and some existing open source projects to implement an offline version of the Alexa skills JS API. Today it’s gotten to the point where it’s actually usable (for certain values of usable) and I’ve just spent the last 5 minutes asking it to tell me Knock-Knock jokes, so rather than waste any more time on that, I thought I’d write this about it instead. If you want to try it out, check out this repository and run npm install in the usual way. You’ll need pocketsphinx installed for that to succeed (install sphinxbase and pocketsphinx from github), and you’ll need espeak installed and some skills for it to do anything interesting, so check out the Alexa sample skills and sym-link the ‘samples‘ directory as a directory called ‘skills‘ in your ferris checkout directory. After that, just run the included example file with node and talk to it via your default recording device (hint: say ‘launch wise guy‘).

Hopefully someone else finds this useful – I’ll be using this as a base to prototype further voice experiments, and I’ll likely be extending the Alexa API further in non-standard ways. What was quite neat about all this was just how easy it all was. The Alexa API is extremely well documented, Node.js is also extremely well documented and just as easy to use, and there are tons of libraries (of varying quality…) to do what you need to do. The only real stumbling block was pocketsphinx’s lack of documentation (there’s no documentation at all for the Node bindings and the C API documentation is pretty sparse, to say the least), but thankfully other members of my team are much more familiar with this codebase than I am and I could lean on them for support.

I’m reasonably impressed with the state of lightweight open source voice recognition. This is easily good enough to be useful if you can limit the scope of what you need to recognise, and I find the Alexa API is a great way of doing that. I’d be interested to know how close the internal implementation is to how I’ve gone about it if anyone has that insider knowledge.

June 01, 2016 04:54 PM

May 20, 2016

I have some 2” wooden blinds in my house that I’ve been wanting to motorize. Why? I’m lazy and I thought it would be cool to have.

The best commercial solution for retrofitting existing blinds seems to be Somfy. They have wireless battery-powered systems and fancy-looking remotes. For new motorized blinds, Bali seems to be popular, and they use Somfy for the motorization. There are also some kickstarter things (MOVE, MySmartBlinds), but the last time I looked those didn’t really do what I want. Somfy likely has a good product, but it’s very expensive. It looks like it would cost about $150 per blind, which is just way too much for me. They want $30 just for the plastic wand that holds the batteries (8 x AA). We’re talking about a motor and a wireless controller to tell it what to do. It’s not rocket surgery, so why should it cost $150?

My requirements are:

- Ability to tilt the blinds to one of three positions (up, middle, down) remotely via some wireless interface. I don’t care about raising or lowering the entire blind.

- There must be some API for the wireless interface such that I can automate them myself (close at night, open in morning)

- Tilt multiple blinds at the same time so they look coordinated.

- Be power efficient – one set of batteries should last more than a year.

Somfy satisfies this if I also buy their “Universal RTS Interface” for $233, but that only makes their solution even more expensive. For the 6 blinds I wanted to motorize, it would cost about $1200. No way.

I’ve been meaning to get into microcontrollers for a while now, and I thought this would be the perfect project for me to start. About a year ago I bought a RedBear BLE Nano to play with some Bluetooth stuff, so I started with that. I got a hobby servo and a bunch of other junk (resistors, capacitors, etc) from Sparkfun and began flailing around while I had some time off around Christmas. The Arduino environment on the BLE Nano is a little weird, but I got things cobbled together relatively quickly. The servo was very noisy, and it’s difficult to control the speed, but it worked. Because I wanted to control multiple devices at once, BLE was not a really great option (since AFAIK there is no way to ‘broadcast’ stuff in a way that is power-efficient for the listeners), and I started looking at other options. Eventually I ran across the Moteino.

The Moteino is an Arduino clone paired with a RFM69W wireless radio, operating at either 915Mhz or 433Mhz. It also has a very efficient voltage regulator, making it suitable for battery powered applications. The creator of the board (Felix Rusu) has put in a lot of work to create libraries for the Moteino to make it useful in exactly my type of application, so I gave it a try. The RFM69 library is lovely to work with, and I was sending messages between my two Moteinos in no time. The idea is to have one Moteino connected via USB to a Linux box (I already have a BeagleBone Black) as a base station which will relay commands to the remote devices. I got my servo working again with the Moteino quickly, as most of the code Just Worked.

I started out with a hobby servo because I knew it would be easy to control, but the noise and lack of speed control really bothered me. I needed to try something else. I considered higher quality servos, a gear motor with encoder or limit switches, stepper motors, worm gear motors, etc. I was going to end up building 6 of these things to start with, so cost was definitely a big factor. I ended up settling on the 28BYJ-48 stepper motor because it is extremely cheap (about $2), relatively quiet, and let me control the speed of rotation very precisely. There is a great Arduino library for stepper motors, AccelStepper, which lets you configure acceleration/deceleration, maximum speed, etc. It also has an easy-to-use API for positioning the motor. I found a 5mm x 8mm aluminum motor coupling to connect the motor to the blinds shaft. I then used a zip tie and a piece of rubber to secure the motor to the blinds rail. This doesn’t look very professional, but it’s not something you really see (my blinds have a valance that covers the rail). A better solution involving some kind of bracket would be great, but would increase the cost and require a lot more time. Using the stepper, I was able to smoothly, quietly, and cost-effectively control the blinds.

I then started to look into power consumption. If you don’t do put anything to sleep, the power usage is pretty high. The LowPower library from Felix makes it easy to put the CPU to sleep, which helps a lot. When sleeping, the CPU uses very little power (about 3µA I think), and the radio will wake you up via interrupt if a message arrives. The radio uses roughly 17mA in receive mode, however, so that means we’d only get about a week of battery life if we used a set of high-quality AAs (3000mAh / 17mA = 176h). We need to do a lot better.

The RFM69 has a useful feature called Listen Mode that some folks on the LowPowerLabs forums have figured out how to use. In this mode, you can configure the radio to cycle between sleeping and receiving in order to reduce power consumption. There are a lot of options here, but it was discovered that you only need to be in the RX phase for 256µS in order for a message to be detected. When the radio is asleep it uses about 4µA. So if you sleep for 1s and receive for 256µS, that means your average power consumption for the radio is about 12µA. This is a dramatic improvement, and it means that the device can still respond in roughly one second, which is certainly adequate for my application. Of course, you can always trade even more responsiveness for power efficiency, and people using this method on coin cell batteries certainly do that. There is one user on the forums who has an application with an expected battery life of over 100 years on a single coin cell! I have an extension of the RFM69 library, RFM69_WL, which collected some of the other listen mode code that was floating around and extends it so you can set your own sleep/RX durations.

I’ve measured/calculated my average power consumption to be about 46µA if I run the motor for 12s per day. That comes out to over 7 years of life on a set of 4 AA batteries (Energizer Ultimate Lithium), which is an almost unbelievable number. There are several factors I am not really considering, however, such as RF noise (which wakes the radio causing increased power consumption), so the real life performance might not be very close to this. Still, if I can get 2 years on a set of 4 AAs I’ll be pretty happy.

Usually when you buy a 28BYJ-48 it will include a driver board that has a ULN2003A, some connectors, and a set of LEDs for showing which phase of the stepper is active. This is fine for testing and development, but it was going to be pretty clunky to use this in a final solution. It was time to design my first PCB!

I found out early on that it was pretty difficult to talk to other people about problems with your project without having a schematic, so I made one of those. I started with Fritzing, but moved to EAGLE when it was time to do the PCB. It seemed to be the standard thing to use, and it was free. EAGLE has a pretty steep learning curve, but some tutorials from Sparkfun helped a lot. I also got some help from the folks on the LowPowerLabs forums (TomWS, perky), who I suspect do this kind of thing for a living. You can get the EAGLE schematic and board design here.

View post on imgur.com

View post on imgur.com

I ordered my first batch of boards from Seeed Studio, as well as a bunch of supporting components from Digikey. The PCB orders typically take a little over two weeks, which is quite a bit more waiting than I’m accustomed to. I was pretty excited when they arrived, and started checking things out. I soon realized I had made a mistake. The component I had in my design for the motor connector (which is a JST-XH 5-pin) was the wrong pitch and size, so the socket I had didn’t fit. Whoops. The hardware world does not play well with my “just try some stuff” mentality from working with software. I found an EAGLE library for the JST-XH connectors, used the correct part, and ordered another batch of PCBs. This time I actually printed out my board on paper to make sure everything matched up. I had run across PCBShopper while waiting for my first batch of boards, so I decided to use a different fabricator this time. I chose Maker Studio for the second order, since I could pay about the same amount and get red boards instead of green. Another two weeks went by, and finally last week I received the boards. I assembled one last weekend using my fancy (and cheap!) new soldering station. It didn’t work! Shit! The Moteino was working fine, but the motor wasn’t moving. Something with the motor driver or connection was hosed. After probing around for a pretty long time, I finally figured out that the socket was installed backwards. It seems the pins in the EAGLE part I found were reversed. Ugh. With a lot of hassle, I was able to unsolder the connector from the board and reverse it. The silkscreen outline doesn’t match up, but whatever. It works now, which was a big relief.

View post on imgur.com

I thought about putting the board in some kind of plastic enclosure, but it was hard to find anything small enough to fit inside the rail while also being tall enough to accomodate the Moteino on headers. I’m planning to just use some extra-wide heat shrink to protect the whole thing instead, but haven’t done that yet.

Below are some photos and videos, as well as the entire list of parts I’ve used and their prices. Each device costs about $40, which is a pretty big improvement over the commercial options (except maybe the kickstarter stuff). Also important is that there are no wires or electronics visible, which was critical for the Wife Acceptance Factor (and my own, honestly).

I’m sure a real EE will look at this and think “pfft, amateur!”. And that’s fine. My goals were to learn and have fun, and they were definitely accomplished. If I also produced something usable, that’s a bonus.

View post on imgur.com

View post on imgur.com

View post on imgur.com

Bill of Materials

In order to talk to the devices from a host computer, you'll also need a Moteino USB ($27). To program the non-USB Moteinos you'll need a FTDI adapter. LowPowerLabs sells one of those for $15, but you may be able to find a better deal elsewhere.

May 20, 2016 10:00 AM

April 18, 2016

When typing on a laptop keyboard, I find that my posture tends to get very closed and hunched. To fix this I resurrected an old low-tech solution I had for this problem: using two keyboards. Simply plug in an external USB keyboard, and use one keyboard for each hand. It's like a split keyboard, but better, because you can position it wherever you want to get a posture that's comfortable for you.

I used to do this on a Windows machine back when I was working at RIM and it worked great. Recently I tried to do it on my Mac laptop, but ran into the problem where the modifier state from one keyboard didn't apply to the other keyboard. So holding shift on one keyboard and T on the other wouldn't produce an uppercase T. This was quite annoying, and it seems to be an OS-level thing. After some googling I found Karabiner which solves this problem. Well, really it appears to be a more general keyboard customization tool, but the default configuration also combines keys across keyboards which is exactly what I wanted. \o/

Of course, changing your posture won't magically fix everything - moving around regularly is still the best way to go, but for me personally, this helps a bit :)

April 18, 2016 02:32 PM

April 06, 2016

My last post talks about the initial work to create a real user analytics system based on the UI Telemetry event data collected in Firefox on Mobile. I’m happy to report that we’ve had much forward progress since then. Most importantly, we are no longer using the DIY setup on one of my Mac Minis. Working with the Mozilla Telemetry & Data team, we have a system that extracts data from UI Telemetry via Spark, imports the data into Presto-based storage, and allows SQL queries and visualization via Re:dash.

With data accessible via Re:dash, we can use SQL to focus on improving our analyses:

- Track Active users, daily & monthly

- Explore retention & churn

- Look into which features lead to retention

- Calculate user session length & event counts per session

- Use funnel analysis to evaluate A/B experiments

Roberto posted about how we’re using Parquet, Presto and Re:dash to create an SQL based query and visualization system.

April 06, 2016 04:18 AM

March 31, 2016

Highlights:

- APK size reduction for downloadable fonts

- now measuring memory via test_awsy_lite

- tsvgx and tp4m moved to Autophone

APK Size

You can see the size of every build on treeherder using Perfherder.

Here’s how the APK size changed over the quarter, for mozilla-central Android 4.0 API15+ opt builds:

The dramatic decrease in February was caused by bug 1233799, which enabled the download content service and removed fonts from the APK.

For the same period, libxul.so generally increased in size: